Optimal BFS: mark on discovery, not on dequeue

Probably the single most instructive Leetcode problem I’ve solved is LC 1091 Shortest Path in Binary Matrix. The problem itself is rather straightforward. Its educational value comes in insisting on the optimal implementation of breadth-first search (BFS) — it TLEs (for “Time Limit Exceeded”) on a suboptimal implementation. Specifically, the pattern it enforces is marking a node visited on discovery, not dequeue.

Many problems could teach you techniques like dynamic programming, two pointers, and backtracking, or how to use data structures like prefix trees, heaps, and union find. However, despite solving my fair share of graph problems, I have never come across a problem that enforces this node marking pattern. For this reason, LC 1091 is the most learning-per-solution problem I have solved.

Here is the problem:

Given an

n x nbinary matrix grid, return the length of the shortest “clear path” in the matrix. If there is no clear path, return-1.A clear path in a binary matrix is a path from the top-left cell (i.e.,

(0, 0)) to the bottom-right cell (i.e.,(n - 1, n - 1)) such that:

- All the visited cells of the path are

0.- All the adjacent cells of the path are 8-directionally connected (i.e., they are different and they share an edge or a corner).

The length of a clear path is the number of visited cells of this path.

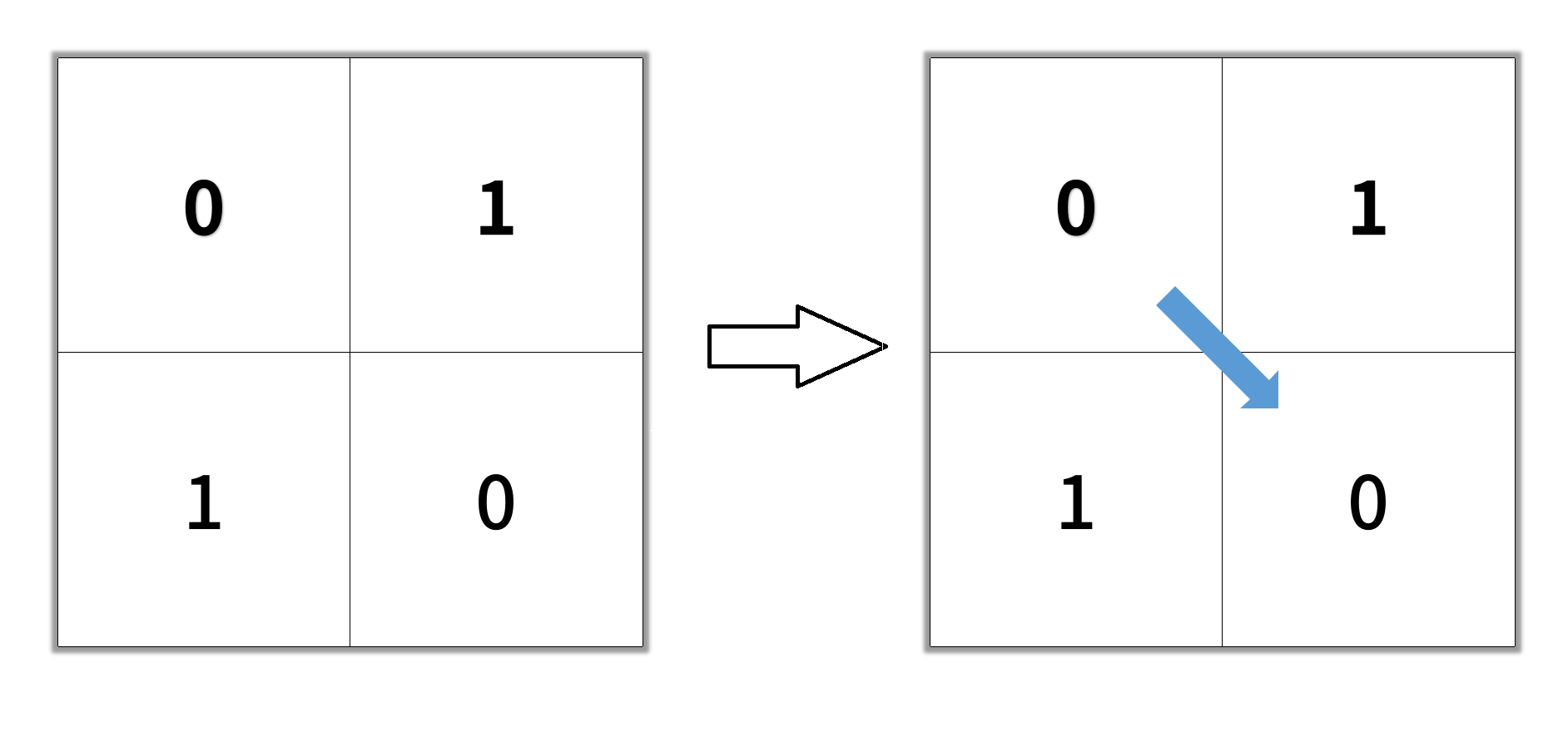

Example 1:

Input:

grid = [[0,1],[1,0]]Output:

2Example 2:

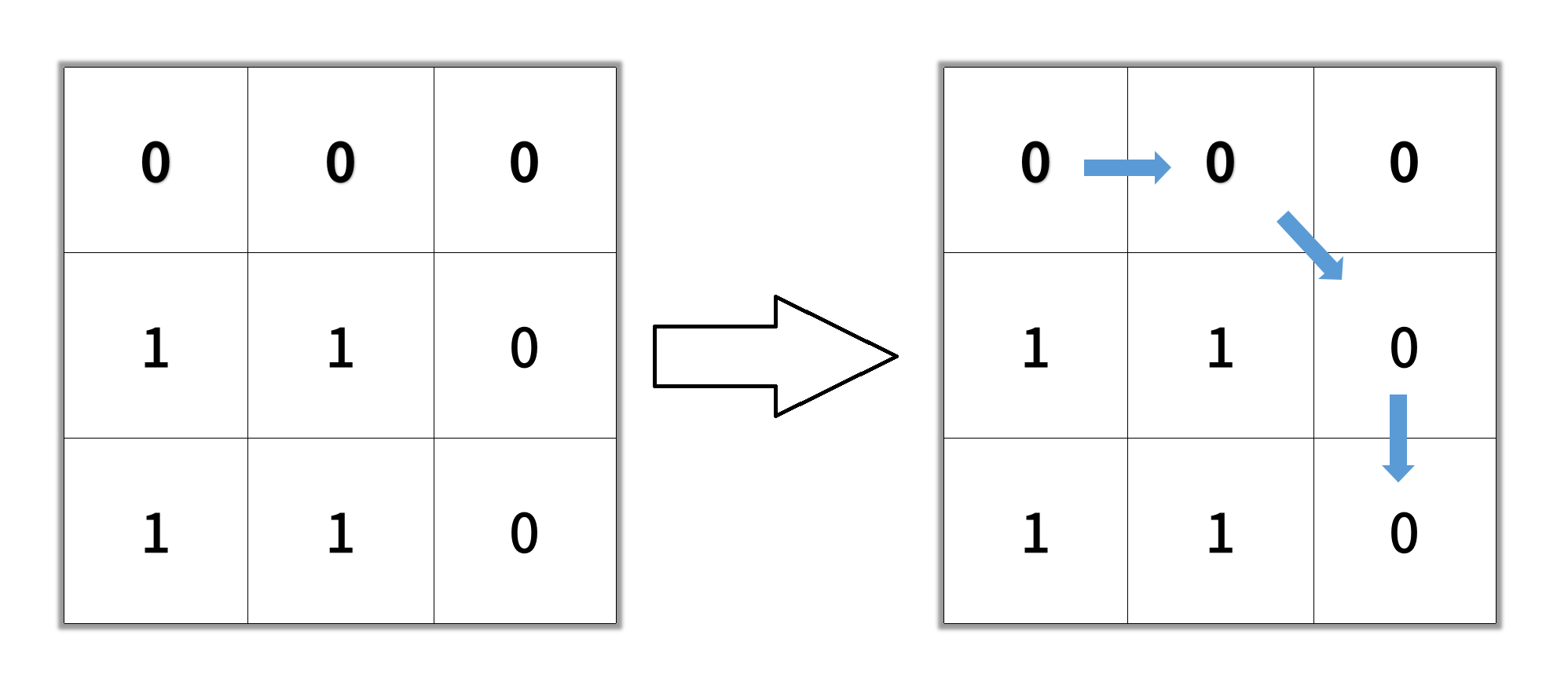

Input:

grid = [[0,0,0],[1,1,0],[1,1,0]]Output:

4Example 3:

Input:

grid = [[1,0,0],[1,1,0],[1,1,0]]Output:

-1Constraints:

n == grid.lengthn == grid[i].length1 <= n <= 100grid[i][j]is0or1

Here is the naive solution:

class Solution:

def shortestPathBinaryMatrix(self, grid: List[List[int]]) -> int:

n = len(grid)

nodeQ = deque()

if grid[0][0] == 0:

nodeQ.append((0, 0, 1))

while nodeQ:

r, c, pathLen = nodeQ.popleft()

if r == c == n - 1:

return pathLen

grid[r][c] = 2 # mark visited

for adjRow, adjCol in (

(r - 1, c - 1), (r - 1, c), (r - 1, c + 1),

(r, c - 1), (r, c + 1),

(r + 1, c - 1), (r + 1, c), (r + 1, c + 1),

):

if (

0 <= adjRow < n and

0 <= adjCol < n and

grid[adjRow][adjCol] == 0

):

nodeQ.append((adjRow, adjCol, pathLen + 1))

return -1

In words: start from the top-left cell and explore outward using BFS, visiting

all 8 neighbors of each cell. Since BFS explores nodes in order of distance, the

first time we reach the bottom-right cell, we have found the shortest path. We

mark cells as visited (setting them to 2) to avoid revisiting them.

However, this solution TLEs because it marks a node as visited only when

dequeueing it with popleft. This can lead to the same node being enqueued and

processed multiple times, if it’s neighboring multiple nodes in the BFS

frontier.

The visualization above runs both strategies on the same open 6x6 grid: on the left, each cell is marked visited the moment it is enqueued; on the right, it is marked only when dequeued. Watch the queue on the right: it fills up with duplicate entries because multiple frontier nodes independently discover the same neighbor and each enqueues it before any copy is dequeued.

With 8-directional movement, a single cell can be neighbor to up to 8 other cells. Any of those that are dequeued before it will enqueue it again, since it hasn’t been marked visited yet. Each duplicate is later dequeued and skipped, but the cost to wastefully enqueue-then-immediately-dequeue adds up. The bloated queue also leads to a memory blowup. Even on this small 6x6 grid, the mark-on-dequeue strategy produces 101 steps and 111 total enqueues, compared to just 37 steps and 36 enqueues for mark-on-discovery. On the 100x100 grids in LC 1091, this blowup causes TLE.

The fix is a small rearrangement. Mark the cell visited eagerly when you enqueue it, not when you dequeue it:

class Solution:

def shortestPathBinaryMatrix(self, grid: List[List[int]]) -> int:

n = len(grid)

nodeQ = deque()

if grid[0][0] == 0:

nodeQ.append((0, 0, 1))

grid[0][0] = 2 # mark visited on discovery

while nodeQ:

r, c, pathLen = nodeQ.popleft()

if r == c == n - 1:

return pathLen

for adjRow, adjCol in (

(r - 1, c - 1), (r - 1, c), (r - 1, c + 1),

(r, c - 1), (r, c + 1),

(r + 1, c - 1), (r + 1, c), (r + 1, c + 1),

):

if (

0 <= adjRow < n and

0 <= adjCol < n and

grid[adjRow][adjCol] == 0

):

grid[adjRow][adjCol] = 2 # mark visited on discovery

nodeQ.append((adjRow, adjCol, pathLen + 1))

return -1

The visited mark moves from after the popleft to the point where each neighbor

is enqueued. This guarantees that every cell enters the queue exactly once,

keeping the time complexity at a clean O(n^2) for an n x n grid.

Depth-first search

Note that you don’t need to worry about this implementation subtlety with (recursive) depth-first search (DFS), where processing happens immediately at discovery. There’s no queue where a node sits waiting while other frontier nodes can rediscover and enqueue it. It’s the window between “discovered” and “processed” that creates the problem in BFS, and that window just doesn’t exist in DFS. See this toy code:

# DFS: mark and recurse happen together, no gap

def dfs(node):

visited[node] = True

for neighbor in adj[node]:

if not visited[neighbor]:

dfs(neighbor)

However, if you write an iterative DFS with a stack and push all neighbors before popping, you get the same problem with duplicates.

Bad implementation:

def dfs(graph, start):

stack = [start]

visited = set()

while stack:

node = stack.pop()

if node in visited:

continue

visited.add(node) # mark on pop (same problem as BFS mark-on-dequeue)

for neighbor in graph[node]:

if neighbor not in visited:

stack.append(neighbor)

Good implementation:

def dfs(graph, start):

stack = [start]

visited = {start} # mark on push

while stack:

node = stack.pop()

for neighbor in graph[node]:

if neighbor not in visited:

visited.add(neighbor) # mark on push

stack.append(neighbor)

The bottom line: whenever you’re writing a graph search implementation, mark nodes visited on discovery, not on dequeue. For the most natural implementation of DFS (recursive), this gets handled automatically. But the most natural implementation of BFS is iterative, and you need to keep in mind node marking hygiene.